Data Warehouse or Data Lake? The Path to Lakehouse and Data Mesh

Introduction

Every team wants faster insights without ballooning costs or vendor lock-in. The problem: legacy data warehouses struggle with semi-structured and unstructured data and are slow to onboard new or fast-changing sources, while pure data lakes can devolve into swamps without governance. This guide explains the differences, then shows how to combine the best of both with a lakehouse, and when an organizational model like data mesh makes sense. You'll get practical steps you can apply this quarter.

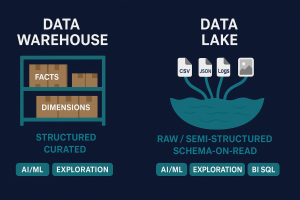

1) Data warehouse vs data lake—what’s the real difference?

Data warehouse (DW)

- Optimized for structured, curated data and BI.
- Strong schemas (star/snowflake), governed transformations, fast SQL.
- Typically batch-oriented; slower to onboard novel sources.

Data lake (DL)

- Stores raw/semi-structured/unstructured data at low cost.
- Great for AI/advanced analytics; schema-on-read flexibility.
- Needs governance to avoid duplication, drift, and unclear ownership.
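
To make schema-on-write versus schema-on-read concrete, here is a minimal Python sketch using only the standard library. The `orders` columns, the JSON payloads, and both function names are hypothetical; real warehouses enforce schemas through DDL and load jobs, and lakes usually apply them through a query engine rather than hand-written loops.

```python
import json
from datetime import date

# Hypothetical "orders" schema: column name -> function that casts a raw value.
ORDERS_SCHEMA = {"order_id": int, "amount": float, "order_date": date.fromisoformat}


def load_on_write(raw_rows):
    """Schema-on-write (warehouse style): validate and cast every row before storing it."""
    table = []
    for raw in raw_rows:
        row = json.loads(raw)
        if set(row) != set(ORDERS_SCHEMA):
            # An unexpected or missing column blocks the load until the schema is changed.
            raise ValueError(f"schema mismatch: got columns {sorted(row)}")
        table.append({col: cast(row[col]) for col, cast in ORDERS_SCHEMA.items()})
    return table


def read_with_schema(raw_rows, columns):
    """Schema-on-read (lake style): raw JSON stays untouched; each query applies its own projection."""
    result = []
    for raw in raw_rows:
        row = json.loads(raw)
        projected = {}
        for col in columns:
            cast = ORDERS_SCHEMA.get(col, str)  # fields outside the known schema are read as strings
            projected[col] = cast(row[col]) if col in row else None  # absent fields become None
        result.append(projected)
    return result
```

If a newer payload adds a `coupon` field, the schema-on-write loader rejects the whole batch, while the schema-on-read path simply returns `None` for older rows that lack it. That is the flexibility versus governance trade-off in miniature.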

Quick rule of thumb

- KPI-driven reporting with strict definitions → DW patterns shine.
- Diverse sources, ML/RAG, rapid onboarding → DL patterns shine.
- Most organizations benefit from a lakehouse that blends both.

2) Lakehouse: unify flexibility and governance

A lakehouse keeps data in open formats on object storage but exposes warehouse-like ACID tables and performant SQL.
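
The ACID behavior usually comes from a transaction log kept next to the data files: Delta Lake writes numbered JSON commits into a `_delta_log` directory, while Iceberg tracks snapshots through metadata files that a catalog points to. The toy class below is not the API of either format; it is a minimal sketch of the idea under stated assumptions. A writer publishes version N+1 with a create-only operation, so two concurrent writers cannot both win, and readers replay the log to get a consistent snapshot. Here `os.link` on a local directory stands in for whatever put-if-absent or atomic-swap mechanism the real storage or catalog provides, and the file names are placeholders (no Parquet is written).

```python
import json
import os
import tempfile


class ToyTable:
    """Toy table format: immutable data files plus an append-only log of numbered commits."""

    def __init__(self, root):
        self.log_dir = os.path.join(root, "_log")
        os.makedirs(self.log_dir, exist_ok=True)

    def _entry_path(self, version):
        return os.path.join(self.log_dir, f"{version:020d}.json")

    def latest_version(self):
        versions = [int(n.split(".")[0]) for n in os.listdir(self.log_dir) if n.endswith(".json")]
        return max(versions, default=-1)  # -1 means "no commits yet"

    def commit(self, read_version, add=(), remove=()):
        """Publish version read_version + 1 atomically, or fail if another writer got there first."""
        new_version = read_version + 1
        # Write the full entry to a temp file first so readers never see a half-written commit.
        fd, tmp_path = tempfile.mkstemp(dir=self.log_dir, suffix=".tmp")
        with os.fdopen(fd, "w") as f:
            json.dump({"add": list(add), "remove": list(remove)}, f)
        try:
            # os.link refuses to overwrite an existing name: a put-if-absent on the version number.
            os.link(tmp_path, self._entry_path(new_version))
        except FileExistsError:
            raise RuntimeError(f"conflict: version {new_version} already exists; re-read and retry") from None
        finally:
            os.remove(tmp_path)  # the committed entry survives under its versioned name
        return new_version

    def snapshot(self, version=None):
        """Replay commits 0..version to get the live data files (time travel if version is given)."""
        last = self.latest_version() if version is None else version
        files = set()
        for v in range(last + 1):
            with open(self._entry_path(v)) as f:
                entry = json.load(f)
            files -= set(entry["remove"])
            files |= set(entry["add"])
        return files
```

A compaction, for example, is just one commit that removes small files and adds a merged one; readers on older versions keep their snapshot until they re-read the log.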

Core building blocks

- Open tables on object storage: Parquet + Iceberg/Delta/Hudi.
- Ingestion: Change Data Capture (CDC) for real time + scheduled batch.
- Governance: unified catalog, lineage, data quality, RBAC/ABAC.
- Compute: SQL engines for BI, notebooks for DS/ML, streaming for freshness.
- Security: encryption, network isolation, auditability.

Why it wins

- One platform for BI & AI (dashboards, forecasting, RAG).
- Open formats → lower lock-in; decoupled storage and compute → each scales independently.
- Real-time ready with CDC and streaming transforms.

3) Data mesh: scale through domain ownership

Data mesh is an operating model, not a tool. It moves ownership to business domains (Finance, Ops, CX) and publishes data products with clear contracts.

Principles to know

- Domain ownership: teams closest to the process own and serve their data.
- Data as a product: documented, discoverable, with SLOs and versioning.
- Self-serve platform: shared ingestion, storage, catalog, security.
- Federated governance: central policies; domain autonomy within guardrails.
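
Versioning is where "data as a product" becomes concrete. A minimal sketch, assuming a semantic-versioning convention (MAJOR.MINOR.PATCH) that this guide does not itself prescribe: removing or retyping a column breaks consumers and needs a major bump, while adding a column needs at least a minor bump. The `DataProduct` fields and the `orders` example are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataProduct:
    name: str
    owner: str                  # owning domain team
    version: str                # semantic version "MAJOR.MINOR.PATCH"
    schema: dict                # column name -> type name
    freshness_slo_minutes: int  # promised maximum data age


def required_bump(old_schema, new_schema):
    """Removing or retyping a column is breaking (major); adding one is additive (minor)."""
    if any(col not in new_schema or new_schema[col] != typ for col, typ in old_schema.items()):
        return "major"
    if any(col not in old_schema for col in new_schema):
        return "minor"
    return "patch"


def check_release(current, candidate):
    """Raise if the candidate's version number understates the impact of its schema change."""
    need = required_bump(current.schema, candidate.schema)
    cur = tuple(int(p) for p in current.version.split("."))
    new = tuple(int(p) for p in candidate.version.split("."))
    ok = {"major": new[:1] > cur[:1], "minor": new[:2] > cur[:2], "patch": new > cur}[need]
    if not ok:
        raise ValueError(f"{candidate.name}: {need} change needs a {need} version bump, got {candidate.version}")
```

Running `check_release` in the publishing pipeline lets a domain ship additive changes freely while consumers get a clear signal (a new major version) before anything they depend on disappears.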

When to consider mesh

- Many domains and cross-functional data needs.
- Central team is a bottleneck.
- You need autonomy without losing compliance.

4) A practical path (6 steps you can start now)

Step 1: Define outcomes & guardrails

- Pick 2–3 priority use cases and pin down data sensitivity, SLA/latency targets, and the access model.

Step 2: Stand up the lakehouse foundation

- S3-compatible object storage with Iceberg or Delta tables, a unified catalog, and role-based access.
- Zones: Bronze (raw) → Silver (validated) → Gold (analytics-ready).
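
A minimal, in-memory Python sketch of the Bronze → Silver → Gold flow. The order fields, the `orders_api` source tag, and the "latest `updated_at` wins" rule are illustrative assumptions; in practice each zone would be its own Iceberg or Delta table written by a SQL or Spark job.

```python
import json


def to_bronze(raw_lines, source="orders_api"):
    """Bronze: keep every raw record exactly as received, plus where it came from."""
    return [{"raw": line, "source": source} for line in raw_lines]


def to_silver(bronze):
    """Silver: parse, validate types, and keep one row per order_id (latest update wins)."""
    latest, rejects = {}, []
    for rec in bronze:
        try:
            row = json.loads(rec["raw"])
            order = {
                "order_id": int(row["order_id"]),
                "order_date": str(row["order_date"]),
                "amount": float(row["amount"]),
                "updated_at": str(row["updated_at"]),  # assumes ISO-8601 strings in one timezone
            }
        except (ValueError, KeyError, TypeError):
            rejects.append(rec)  # quarantined for inspection instead of silently dropped
            continue
        prev = latest.get(order["order_id"])
        if prev is None or order["updated_at"] > prev["updated_at"]:
            latest[order["order_id"]] = order
    return list(latest.values()), rejects


def to_gold(silver):
    """Gold: analytics-ready aggregate (revenue per day) for dashboards."""
    revenue = {}
    for row in silver:
        revenue[row["order_date"]] = revenue.get(row["order_date"], 0.0) + row["amount"]
    return dict(sorted(revenue.items()))
```

Keeping Bronze untouched means Silver and Gold can always be rebuilt if a validation rule or aggregate definition changes.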

Step 3: Ingest smart with CDC + batch

- CDC for operational sources that need freshness; batch for slower systems and files.
- Validate, deduplicate, and track lineage; automate schema drift alerts.
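
One way to apply CDC safely, sketched in Python under assumptions: each change event carries a hypothetical monotonically increasing `seq` (standing in for a database log position), an `op` of insert/update/delete, a primary `key`, and the new row image `after`. Skipping events at or below the last applied position makes redelivered or replayed batches idempotent, which is one common deduplication strategy. The drift helper compares a row against the expected columns so the pipeline can raise an alert.

```python
def apply_cdc(table, events, last_applied_seq):
    """Apply insert/update/delete events to `table` (a dict keyed by primary key).

    Returns the new last applied position; events at or below it are treated as duplicates.
    """
    for ev in sorted(events, key=lambda e: e["seq"]):
        if ev["seq"] <= last_applied_seq:
            continue  # already applied: a replay or redelivery
        if ev["op"] == "delete":
            table.pop(ev["key"], None)
        elif ev["op"] in ("insert", "update"):
            table[ev["key"]] = ev["after"]
        else:
            raise ValueError(f"unknown op {ev['op']!r}")
        last_applied_seq = ev["seq"]
    return last_applied_seq


def schema_drift(expected_columns, row):
    """Report added and missing columns so drift triggers an alert instead of a silent break."""
    cols, expected = set(row), set(expected_columns)
    return {"added": sorted(cols - expected), "missing": sorted(expected - cols)}
```

Persisting the returned position together with the table write (for example, in the same commit) is what keeps a restart from applying events twice.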

Step 4: Serve BI and AI from the same data

- SQL views for dashboards; notebooks and vector indexes for AI/ML.
- Enforce row- and column-level security; cache hot queries.
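
A small Python sketch of row- and column-level security combined with query caching. The roles, the policy table, the sample rows, and the `***` masking token are hypothetical; production engines express this as row filters and column masks in the catalog or SQL layer. The design point worth copying is that the cache key includes the caller's role, so a result computed for one role is never served to another.

```python
from functools import lru_cache

# Hypothetical Gold table, for illustration only.
ORDERS = (
    {"order_id": 1, "region": "EU", "customer_email": "a@example.com", "amount": 120.0},
    {"order_id": 2, "region": "US", "customer_email": "b@example.com", "amount": 80.0},
    {"order_id": 3, "region": "EU", "customer_email": "c@example.com", "amount": 45.0},
)

# role -> (row filter, columns the role may see unmasked)
POLICIES = {
    "eu_analyst": (lambda r: r["region"] == "EU", {"order_id", "region", "amount"}),
    "admin": (lambda r: True, {"order_id", "region", "customer_email", "amount"}),
}


def secure_rows(role):
    """Apply the role's row filter, then mask every column it may not see."""
    row_filter, visible = POLICIES[role]
    return [
        {col: (val if col in visible else "***") for col, val in row.items()}
        for row in ORDERS
        if row_filter(row)
    ]


@lru_cache(maxsize=128)
def revenue_by_region(role):
    """Cached hot query; the role is the cache key. Call cache_clear() when the table changes."""
    totals = {}
    for row in secure_rows(role):
        totals[row["region"]] = totals.get(row["region"], 0.0) + row["amount"]
    return tuple(sorted(totals.items()))  # immutable, so cached results cannot be mutated
```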

Step 5: Bake in observability

- Monitor data quality, job SLAs, query latency, and access logs.
- Run CI tests for pipelines and table contracts before publishing.
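
Observability checks can double as the CI gate before a table is published. A minimal sketch, assuming hypothetical thresholds (a per-column null-rate limit and a freshness SLA) and a key column that must be unique; the function name and parameters are illustrative, not from any particular tool.

```python
from datetime import datetime, timedelta, timezone


def check_table(rows, *, key, max_null_rate, last_loaded_at, freshness_sla, now=None):
    """Return human-readable failures; an empty list means the table may be published."""
    now = now or datetime.now(timezone.utc)
    if not rows:
        return ["table is empty"]
    failures = []
    keys = [row.get(key) for row in rows]
    if len(keys) != len(set(keys)):
        failures.append(f"duplicate values in key column {key!r}")
    for col, allowed in max_null_rate.items():
        rate = sum(row.get(col) is None for row in rows) / len(rows)
        if rate > allowed:
            failures.append(f"{col!r} null rate {rate:.0%} exceeds {allowed:.0%}")
    age = now - last_loaded_at
    if age > freshness_sla:
        failures.append(f"stale: last load {age} ago exceeds SLA of {freshness_sla}")
    return failures
```

In a CI job, a pipeline test can simply `assert check_table(...) == []` before promoting the table; in production, the same failures can feed alerts and SLA dashboards.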

Step 6 (optional): Evolve to data mesh

- Assign domain owners; publish data products with contracts and SLOs.
- Keep a central governance council to align policies and metrics.
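
Federated governance is often implemented as policy as code: the central council owns a short list of rules, every domain's data product metadata is checked against them automatically, and the domain keeps control of its own schemas and pipelines. A minimal sketch; the three rules and the metadata fields are hypothetical examples, not a standard.

```python
# Central rules owned by the governance council: name -> predicate over product metadata.
GLOBAL_POLICIES = {
    "has an owning domain": lambda p: bool(p.get("owner")),
    "declares a freshness SLO": lambda p: isinstance(p.get("freshness_slo_minutes"), int)
    and p["freshness_slo_minutes"] > 0,
    "masks every PII column": lambda p: set(p.get("pii_columns", [])) <= set(p.get("masked_columns", [])),
}


def evaluate(products):
    """Return {product name: [violated policies]} for products that fail any central rule."""
    report = {}
    for product in products:
        violations = [name for name, rule in GLOBAL_POLICIES.items() if not rule(product)]
        if violations:
            report[product["name"]] = violations
    return report
```

Running `evaluate` when a domain publishes a product keeps the guardrails uniform without routing every change through a central team.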

5) Decision checklist—what should you build first?

- Need immediate reporting wins? Start with a lakehouse exposing Gold views.
- Heavy AI/ML ambitions? Emphasize open formats + notebooks + vector search from day one.
- Multiple autonomous teams? Pilot mesh with 1–2 domains on top of the lakehouse.
- Concerned about lock-in/cost? Favor open table formats and portable engines.

Summary & Call to Action

- DW vs DL: warehouses excel at governed reporting; lakes unlock flexibility for AI.
- Lakehouse: combine both with open formats, governance, and performance.
- Data mesh: scale delivery through domain ownership with central guardrails.

Want a secure, open lakehouse (and a roadmap to mesh) without vendor lock-in? Contact X14, book a demo, or read more about how we deliver real-time analytics and AI on your terms.